Data Preprocessing

The images were resized to 128x128, which leaves them visibly pixelated. All the images were also converted from RGB to grayscale.

    import os

    import cv2
    import numpy as np
    from PIL import Image
    from tensorflow.keras.utils import img_to_array  # assumes TensorFlow's bundled Keras

    def num(image):
        # Return the integer written between '(' and ')' in a file name.
        val = 0
        for i in range(len(image)):
            if image[i] == '(':
                while True:
                    i += 1
                    if image[i] == ')':
                        break
                    val = (val*10) + int(image[i])
                break
        return val

    # One array of images (X) and one of target masks (y) per class:
    # benign (b), normal (n) and malignant (m).
    X_b, y_b = np.zeros((437, 128, 128, 1)), np.zeros((437, 128, 128, 1))
    X_n, y_n = np.zeros((133, 128, 128, 1)), np.zeros((133, 128, 128, 1))
    X_m, y_m = np.zeros((210, 128, 128, 1)), np.zeros((210, 128, 128, 1))

    # `path` is the dataset root (assumed to be defined earlier),
    # with one sub-folder per tumor type.
    for tumor_type in os.listdir(path):
        for image in os.listdir(os.path.join(path, tumor_type)):
            p = os.path.join(path, tumor_type, image)
            img = cv2.imread(p, cv2.IMREAD_GRAYSCALE)  # read image as grayscale
            img = cv2.resize(img, (128, 128))
            pil_img = Image.fromarray(img)
            # The check below assumes a 4-character extension such as ".png".
            if image[-5] == ')':
                # If the image is real, add it to X as benign, normal or malignant.
                if image[0] == 'b':
                    X_b[num(image)-1] += img_to_array(pil_img)
                if image[0] == 'n':
                    X_n[num(image)-1] += img_to_array(pil_img)
                if image[0] == 'm':
                    X_m[num(image)-1] += img_to_array(pil_img)
            else:
                # Similarly, add the target mask to y.
                if image[0] == 'b':
                    y_b[num(image)-1] += img_to_array(pil_img)
                if image[0] == 'n':
                    y_n[num(image)-1] += img_to_array(pil_img)
                if image[0] == 'm':
                    y_m[num(image)-1] += img_to_array(pil_img)
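The file-name handling in the preprocessing code (reading the index between the parentheses and telling images from masks) could also be sketched with a regular expression. The name pattern used here, `<class> (<index>)` optionally followed by `_mask` or `_mask_<k>`, is an assumption inferred from the checks in the code, and `parse_name` is a hypothetical helper, not part of the original pipeline.

```python
import re

# Assumed file-name pattern: "<class> (<index>)[_mask[_<k>]].<ext>"
NAME_RE = re.compile(
    r"^(?P<label>[a-z]+) \((?P<index>\d+)\)(?P<mask>_mask(?:_\d+)?)?\.\w+$"
)

def parse_name(filename):
    """Return (label, index, is_mask), or None if the name does not match."""
    match = NAME_RE.match(filename)
    if match is None:
        return None
    return match["label"], int(match["index"]), match["mask"] is not None

print(parse_name("benign (12).png"))       # ('benign', 12, False)
print(parse_name("benign (12)_mask.png"))  # ('benign', 12, True)
```

Compared with indexing `image[-5]`, this version does not depend on the length of the file extension and returns `None` for unexpected names instead of raising an error.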

Model

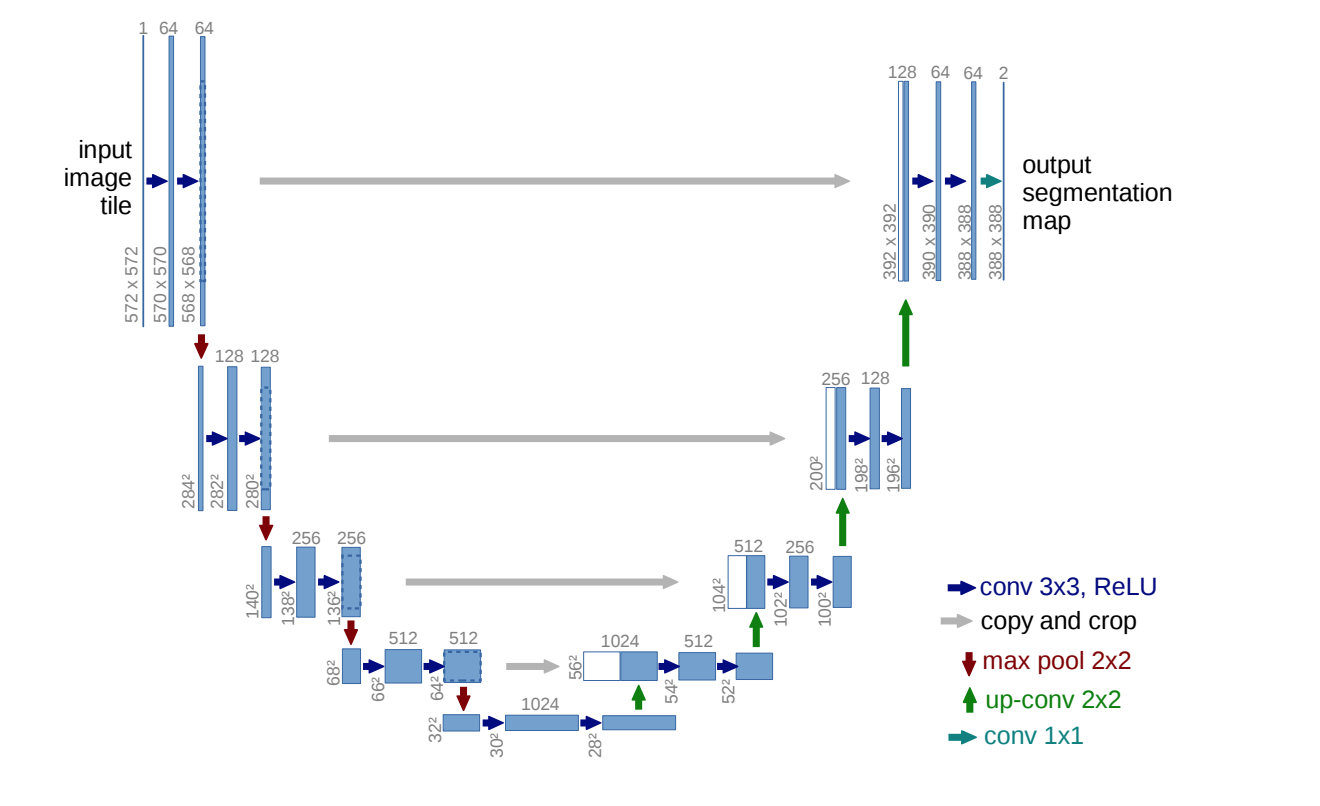

U-Net - The model is an encoder-decoder architecture that takes an image as input and outputs an image. The encoder path downsamples the image until it reaches the bottleneck layer, and from the bottleneck layer the decoder path upsamples it until the output layer. The idea behind the model is that in the contracting path it learns which features in the image are important, and in the expanding path it learns where those features are located in the image.

Contracting path - This path consists of blocks of two 3x3 convolution layers, each block followed by a 2x2 max-pooling layer with a stride of 2, which halves the image size, and a dropout layer. So the spatial size decreases and the depth of the image (number of channels) doubles from block to block until the bottleneck layer.

    from tensorflow.keras.layers import (Concatenate, Conv2D, Conv2DTranspose,
                                         Dropout, Input, MaxPooling2D)

    inply = Input((128, 128, 1,))

    # Block 1: 128x128, 64 channels; pooled to 64x64
    conv1 = Conv2D(2**6, (3,3), activation = 'relu', padding = 'same')(inply)
    conv1 = Conv2D(2**6, (3,3), activation = 'relu', padding = 'same')(conv1)
    pool1 = MaxPooling2D((2,2), strides = 2, padding = 'same')(conv1)
    drop1 = Dropout(0.2)(pool1)

    # Block 2: 64x64, 128 channels; pooled to 32x32
    conv2 = Conv2D(2**7, (3,3), activation = 'relu', padding = 'same')(drop1)
    conv2 = Conv2D(2**7, (3,3), activation = 'relu', padding = 'same')(conv2)
    pool2 = MaxPooling2D((2,2), strides = 2, padding = 'same')(conv2)
    drop2 = Dropout(0.2)(pool2)

    # Block 3: 32x32, 256 channels; pooled to 16x16
    conv3 = Conv2D(2**8, (3,3), activation = 'relu', padding = 'same')(drop2)
    conv3 = Conv2D(2**8, (3,3), activation = 'relu', padding = 'same')(conv3)
    pool3 = MaxPooling2D((2,2), strides = 2, padding = 'same')(conv3)
    drop3 = Dropout(0.2)(pool3)

    # Block 4: 16x16, 512 channels; pooled to 8x8
    conv4 = Conv2D(2**9, (3,3), activation = 'relu', padding = 'same')(drop3)
    conv4 = Conv2D(2**9, (3,3), activation = 'relu', padding = 'same')(conv4)
    pool4 = MaxPooling2D((2,2), strides = 2, padding = 'same')(conv4)
    drop4 = Dropout(0.2)(pool4)

Bottleneck layer - This is the lowest layer in the architecture, with the maximum number of channels (1024) and the minimum spatial size (8x8 for a 128x128 input, after four halvings).

    # Bottleneck: 8x8, 1024 channels
    convm = Conv2D(2**10, (3,3), activation = 'relu', padding = 'same')(drop4)
    convm = Conv2D(2**10, (3,3), activation = 'relu', padding = 'same')(convm)
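The feature-map sizes along the contracting path can be checked with a few lines of arithmetic. This is only a sketch of the shape bookkeeping, not of the network itself; `encoder_shapes` is a hypothetical helper, and its defaults (128x128 input, 64 filters in the first block, four pooling steps) are read off the code above.

```python
import math

def encoder_shapes(size=128, base_filters=64, depth=4):
    """Trace (spatial size, channels) for each encoder block and the bottleneck."""
    shapes = []
    filters = base_filters
    for _ in range(depth):
        # 'same' convolutions keep the spatial size unchanged.
        shapes.append((size, filters))
        # Pooling with stride 2 and 'same' padding halves the size, rounding up.
        size = math.ceil(size / 2)
        # Each block doubles the number of filters.
        filters *= 2
    shapes.append((size, filters))  # bottleneck
    return shapes

print(encoder_shapes())
# [(128, 64), (64, 128), (32, 256), (16, 512), (8, 1024)]
```

The last entry confirms that a 128x128 input reaches the bottleneck as an 8x8 map with 1024 channels.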

Expanding path - Starting from the bottleneck layer, the image is upsampled using transpose convolution layers, each of which doubles the size of the image and halves the number of channels. The upsampled output is concatenated with the output of the encoder block of the same size and passed through two convolution layers. After the decoder layers, a final convolution layer converts the feature maps to the appropriate number of output channels.
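The doubling of the size and halving of the channels can be traced with the same kind of arithmetic. The sketch below assumes the settings used in the decoder code (2x2 transpose convolutions with stride 2 and 'valid' padding, followed by concatenation with the encoder output of the same size); `transpose_conv_size` and `decoder_shapes` are hypothetical helper names.

```python
def transpose_conv_size(size, kernel=2, stride=2):
    """Output size of a 'valid' transpose convolution (for kernel >= stride)."""
    return (size - 1) * stride + kernel

def decoder_shapes(size=8, filters=1024, depth=4):
    """Trace (size, channels after concatenation, channels after the convolutions)."""
    shapes = []
    for _ in range(depth):
        size = transpose_conv_size(size)  # doubles when kernel == stride == 2
        filters //= 2                     # the transpose convolution halves the channels
        concat_channels = filters * 2     # the skip connection adds `filters` encoder channels
        shapes.append((size, concat_channels, filters))
    return shapes

print(decoder_shapes())
# [(16, 1024, 512), (32, 512, 256), (64, 256, 128), (128, 128, 64)]
```

The final entry shows that the decoder returns to the 128x128 input size with 64 channels, which is why one more convolution is needed to reach the output channel count.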

    # Up to 16x16, 512 channels; concatenated with conv4
    tran5 = Conv2DTranspose(2**9, (2,2), strides = 2, padding = 'valid', activation = 'relu')(convm)
    conc5 = Concatenate()([tran5, conv4])
    conv5 = Conv2D(2**9, (3,3), activation = 'relu', padding = 'same')(conc5)
    conv5 = Conv2D(2**9, (3,3), activation = 'relu', padding = 'same')(conv5)
    drop5 = Dropout(0.1)(conv5)

    # Up to 32x32, 256 channels; concatenated with conv3
    tran6 = Conv2DTranspose(2**8, (2,2), strides = 2, padding = 'valid', activation = 'relu')(drop5)
    conc6 = Concatenate()([tran6, conv3])
    conv6 = Conv2D(2**8, (3,3), activation = 'relu', padding = 'same')(conc6)
    conv6 = Conv2D(2**8, (3,3), activation = 'relu', padding = 'same')(conv6)
    drop6 = Dropout(0.1)(conv6)

    # Up to 64x64, 128 channels; concatenated with conv2
    tran7 = Conv2DTranspose(2**7, (2,2), strides = 2, padding = 'valid', activation = 'relu')(drop6)
    conc7 = Concatenate()([tran7, conv2])
    conv7 = Conv2D(2**7, (3,3), activation = 'relu', padding = 'same')(conc7)
    conv7 = Conv2D(2**7, (3,3), activation = 'relu', padding = 'same')(conv7)
    drop7 = Dropout(0.1)(conv7)

    # Up to 128x128, 64 channels; concatenated with conv1
    tran8 = Conv2DTranspose(2**6, (2,2), strides = 2, padding = 'valid', activation = 'relu')(drop7)
    conc8 = Concatenate()([tran8, conv1])
    conv8 = Conv2D(2**6, (3,3), activation = 'relu', padding = 'same')(conc8)
    conv8 = Conv2D(2**6, (3,3), activation = 'relu', padding = 'same')(conv8)
    drop8 = Dropout(0.1)(conv8)
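The code stops at `drop8` and does not show the final convolution layer that the text mentions. A minimal sketch of what such an output layer might look like is given below. The 1x1 kernel, the single output channel and the sigmoid activation are assumptions chosen to match a one-channel 128x128 mask, not details taken from the original model, and `features` is a hypothetical stand-in for `drop8` so that the snippet runs on its own.

```python
from tensorflow.keras.layers import Conv2D, Input
from tensorflow.keras.models import Model

# Hypothetical stand-in for drop8: a 128x128 feature map with 64 channels.
features = Input((128, 128, 64))

# Assumed output layer: a 1x1 convolution maps the 64 channels to 1, and the
# sigmoid keeps every pixel in [0, 1] so it can be read as a mask probability.
outly = Conv2D(1, (1, 1), activation='sigmoid')(features)

model = Model(inputs=features, outputs=outly)
print(model.output_shape)  # (None, 128, 128, 1)
```

In the full network, the same layer would be applied to `drop8` and the model would be built from `inply` to the output tensor.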

Loss functions

Examples